What it was.

Standard web VR approaches often either take over the whole scene or feel visually detached from the host environment.

I built a bridge between Unity tracking and Three.js rendering so web content could inherit the headset pose and projection logic of the surrounding application.

That required solving both architectural and mathematical alignment problems across runtimes.
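One common instance of the mathematical side is handedness: Unity uses a left-handed, Y-up coordinate system with +Z forward, while Three.js is right-handed with the camera looking down -Z. The sketch below shows one way a streamed head pose could be converted by mirroring the Z axis. It is an illustration under assumptions, not the project's actual code: the `Pose` shape and the function names are hypothetical, and the real bridge may have handled scale, origin offsets, or per-eye projection differently.

```typescript
// Hypothetical pose shape; field names are assumptions, not the project's wire format.
interface Vec3 { x: number; y: number; z: number }
interface Quat { x: number; y: number; z: number; w: number }
interface Pose { position: Vec3; rotation: Quat }

// Unity: left-handed, Y up, +Z forward. Three.js: right-handed, Y up, -Z forward.
// Mirroring across the XY plane (negating Z) maps one convention onto the other.
function unityToThreePosition(p: Vec3): Vec3 {
  return { x: p.x, y: p.y, z: -p.z };
}

// Under a Z mirror, a rotation keeps its angle but its axis is reflected and the
// sense of rotation flips, which works out to negating the x and y components.
function unityToThreeRotation(q: Quat): Quat {
  return { x: -q.x, y: -q.y, z: q.z, w: q.w };
}

function unityToThreePose(pose: Pose): Pose {
  return {
    position: unityToThreePosition(pose.position),
    rotation: unityToThreeRotation(pose.rotation),
  };
}

// Rotate a vector by a unit quaternion; used here only to sanity-check the conversion.
function rotate(q: Quat, v: Vec3): Vec3 {
  // t = 2 * cross(q.xyz, v)
  const tx = 2 * (q.y * v.z - q.z * v.y);
  const ty = 2 * (q.z * v.x - q.x * v.z);
  const tz = 2 * (q.x * v.y - q.y * v.x);
  // v' = v + w * t + cross(q.xyz, t)
  return {
    x: v.x + q.w * tx + (q.y * tz - q.z * ty),
    y: v.y + q.w * ty + (q.z * tx - q.x * tz),
    z: v.z + q.w * tz + (q.x * ty - q.y * tx),
  };
}
```

On the Three.js side, the converted values could then be applied with `camera.position.set(x, y, z)` and `camera.quaternion.set(x, y, z, w)`. A quick check of the convention: a 90° right turn in Unity (yaw about +Y) should leave the Three.js camera's forward vector, (0, 0, -1), pointing along +X after conversion.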

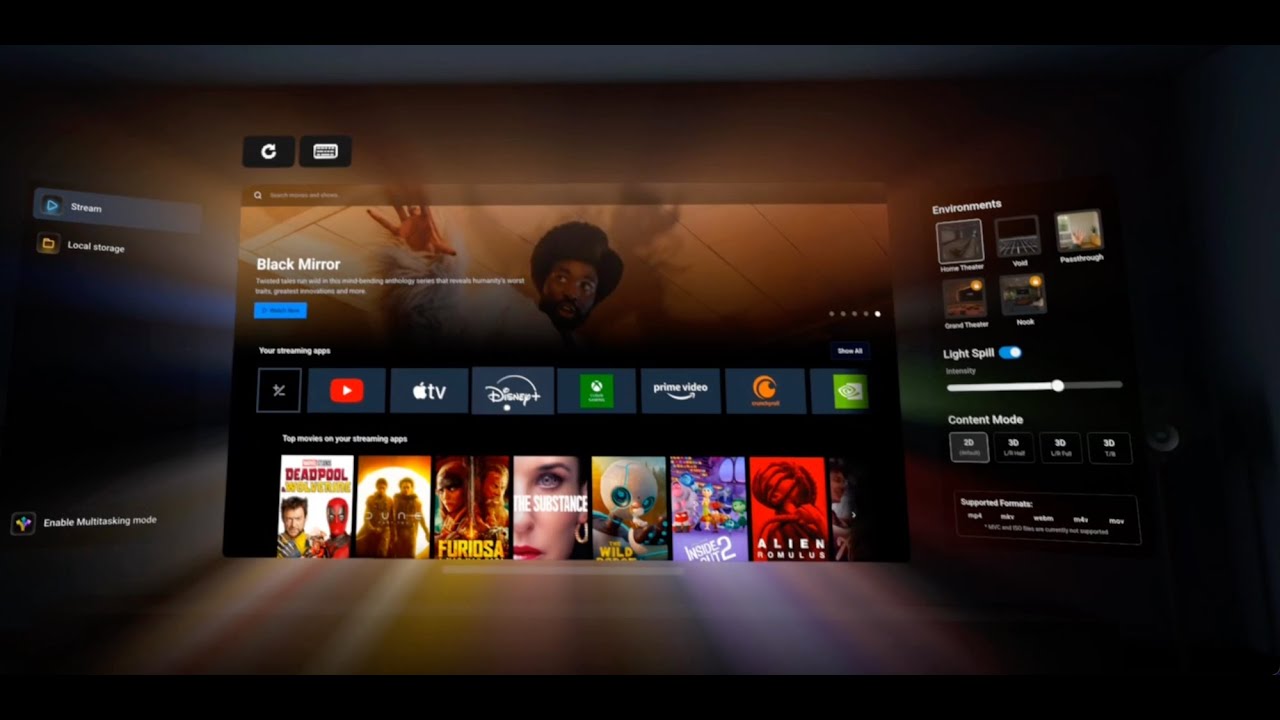

The payoff was web content that felt more physically grounded, which made later interaction and media work more convincing.

The result was a Unity-to-Three.js stereo bridge with real-time pose streaming and more believable depth cues for embedded web content.